George Rebane

Some readers may have become aware of my involvement with, and amazement at, Bayesian probability. Sadly, most RR readers couldn’t care less, and therefore have no idea of the role that Bayesian inference plays in their daily lives. I admit that as a techie, my Bayesian bias started early in my career when I learned to design Kalman filters for Uncle Sam’s military systems. From there I became more immersed in probabilistic models, especially as applied in AI, all powered by Bayes. Today Bayesian inference is supremely (yes, SUPREMELY!) important in all kinds of systems, devices, social enterprises, and legal proceedings. Why? Simply because it is the basis for ALL the correct ways to update current knowledge with new information and data. If you don’t change your mind based on what the Rev. Bayes taught, then you’re not doing it right.

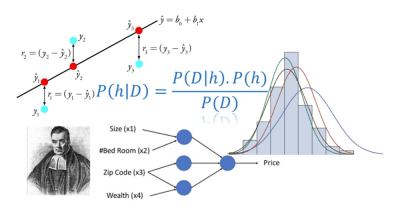

The newest technology buzz to evoke smidgens of lay interest revolves around machine learning algorithms built on deep neural nets, which, you guessed it, are based on Bayesian probability. The net that the good reverend casts today even gathers in the humble and long-known least squares estimation techniques, many of which can be derived by simple application of the calculus. But even least squares derives its ubiquitous power from its Bayesian taproot.
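For the numerate, that taproot can be sketched in three lines. This is the standard textbook argument under stated assumptions, and the symbols are introduced here purely for illustration: measurements $y_i$, a model $f(x_i;\theta)$ with unknown parameters $\theta$, independent Gaussian noise of variance $\sigma^2$, and a flat (uninformative) prior on $\theta$.

```latex
% Assumed measurement model: data = model + independent Gaussian noise
y_i = f(x_i;\theta) + \varepsilon_i, \qquad \varepsilon_i \sim \mathcal{N}(0,\sigma^2)

% Bayes' rule with a flat prior p(\theta) \propto 1
p(\theta \mid y) \;\propto\; p(y \mid \theta)\, p(\theta)
  \;\propto\; \prod_{i=1}^{n} \exp\!\left( -\frac{\bigl(y_i - f(x_i;\theta)\bigr)^2}{2\sigma^2} \right)

% Taking the negative logarithm turns the product into a sum of squares
\hat{\theta} \;=\; \arg\max_{\theta}\, p(\theta \mid y)
  \;=\; \arg\min_{\theta} \sum_{i=1}^{n} \bigl(y_i - f(x_i;\theta)\bigr)^2
```

In words: under those assumptions, the most probable parameters given the data are exactly the ones that minimize the sum of squared errors. Change the noise model or the prior and you get a different estimator, which is why the assumptions matter.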

I just came across a gem of a very short paper – ‘Where did the least square come from?’ – that kills two birds with one stone. In it, author Tirthajyoti Sarkar (another goddam immigrant 😉) gives a lucid explanation of how Bayes underpins least squares, a well-known estimation technique that forms a key optimizing computation in the guts of deep neural nets. That approach to machine learning is used today to derive new and awesome capabilities from large datasets that are completely beyond the ken of man (and woman). If you are numerate, you should really give this little paper a read.
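If you would rather see the claim than take it on faith, here is a minimal numerical sketch. It is my own illustration, not code from the paper, and everything in it is assumed for the demo: the straight-line model, the made-up slope, intercept, and noise level, and the flat prior. It fits the same noisy data two ways, once with ordinary least squares and once by finding the peak of the Bayesian posterior on a grid.

```python
import numpy as np

rng = np.random.default_rng(0)

# Synthetic data: a straight line plus Gaussian noise (all values are made up).
true_slope, true_intercept, sigma = 2.0, -1.0, 0.5
x = np.linspace(0.0, 10.0, 50)
y = true_slope * x + true_intercept + rng.normal(0.0, sigma, size=x.size)

# Route 1: ordinary least squares using NumPy's linear solver.
A = np.column_stack([x, np.ones_like(x)])
theta_ls, *_ = np.linalg.lstsq(A, y, rcond=None)


# Route 2: Bayes with Gaussian noise and a flat prior.
# The log posterior is the Gaussian log likelihood plus a constant.
def log_posterior(slope, intercept):
    residuals = y - (slope * x + intercept)
    return -0.5 * np.sum(residuals**2) / sigma**2


# Evaluate the log posterior on a coarse grid and pick its highest point.
slopes = np.linspace(1.5, 2.5, 201)
intercepts = np.linspace(-2.0, 0.0, 201)
log_post = np.array([[log_posterior(s, b) for b in intercepts] for s in slopes])
i, j = np.unravel_index(np.argmax(log_post), log_post.shape)
theta_map = np.array([slopes[i], intercepts[j]])

print("least squares  (slope, intercept):", theta_ls)
print("posterior peak (slope, intercept):", theta_map)
```

The two answers should agree to within the resolution of the grid. The grid search is deliberately crude; it is there only to show that the posterior peaks where the squared error bottoms out, not as a recommended way to fit a line.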
